Last week I built a self-hosted reading server. Four Docker containers, word-by-word audio sync, works from any device. It felt complete. Then I kept thinking.

Every book file has structure. Chapters, sections, paragraphs. Audiobooks, once aligned to the text, carry timing data: word offsets, sentence durations, the pacing a narrator uses when something emotionally significant is happening. Stack those two things and you get a moment-by-moment signal of what the book is trying to make you feel.
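Here is a rough sketch of what "stacking" structure and timing could look like. It assumes the alignment step has already produced a start and end time for every word; the `Word` and `Section` types and both function names are made up for illustration, not taken from any existing tool. It computes each section's narration pace and compares it to the book's median, so slower-than-usual passages stand out.

```python
from dataclasses import dataclass
from statistics import median


@dataclass
class Word:
    text: str
    start: float  # seconds from the start of the audio (assumed alignment output)
    end: float


@dataclass
class Section:
    title: str
    words: list[Word]


def pace(section: Section) -> float:
    """Narration pace of one section, in words per second."""
    if not section.words:
        return 0.0
    duration = section.words[-1].end - section.words[0].start
    return len(section.words) / duration if duration > 0 else 0.0


def pace_relative_to_book(sections: list[Section]) -> dict[str, float]:
    """Map each section title to its pace divided by the book's median pace.

    Values below 1.0 mean the narrator slowed down relative to the rest
    of the book; values above 1.0 mean they sped up.
    """
    paces = {s.title: pace(s) for s in sections}
    baseline = median(paces.values()) if paces else 0.0
    if baseline == 0:
        return {title: 0.0 for title in paces}
    return {title: p / baseline for title, p in paces.items()}
```

Pace alone is a crude proxy for emotional weight. It is only one signal that could feed into the choice of illustration, alongside the text itself.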

What if you generated an image for each of those moments?

Not cover art. Not marketing material. One illustration per section, generated from the text itself. A chase scene gets something kinetic. A still morning gets something minimal. A memory gets something slightly washed out. The images are shaped by the words, not the publisher’s art department.

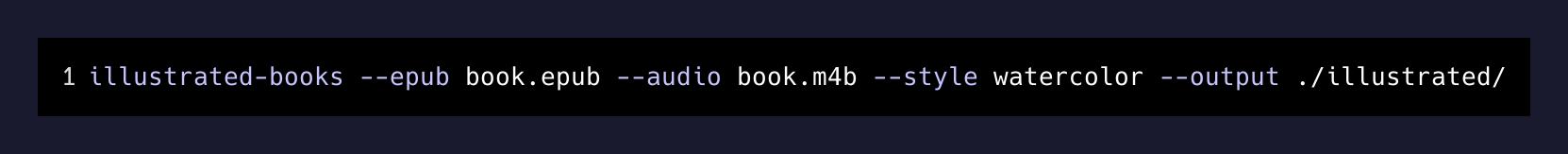

The technical pieces are all available. Text parsing, audiobook alignment, image generation, EPUB injection. The gap is a tool that chains them cleanly, fast enough that each illustration is ready before you reach its page. I want to read Moby Dick and have it look like a 19th-century oil painting. I want The Road to look like ash and static.
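The "ready before you get there" part is mostly a look-ahead cache. A minimal sketch, assuming sections are plain strings and image generation is some callable you plug in; `Prefetcher`, `prompt_for`, `on_reader_at`, and the prompt format are all hypothetical, and no specific image model or API is implied:

```python
from typing import Callable


class Prefetcher:
    """Keeps illustrations generated a few sections ahead of the reader."""

    def __init__(
        self,
        sections: list[str],
        style: str,
        generate: Callable[[str], bytes],  # assumed: prompt in, image bytes out
        lookahead: int = 2,
    ) -> None:
        self.sections = sections
        self.style = style  # e.g. "19th-century oil painting"
        self.generate = generate
        self.lookahead = lookahead
        self.cache: dict[int, bytes] = {}

    def prompt_for(self, index: int) -> str:
        # Hypothetical prompt format: the book-wide style plus a short excerpt.
        excerpt = self.sections[index][:200]
        return f"{self.style}. Scene: {excerpt}"

    def on_reader_at(self, index: int) -> list[int]:
        """Ensure the current section and the next `lookahead` sections
        have images. Returns the indices generated during this call."""
        last = min(index + self.lookahead, len(self.sections) - 1)
        generated = []
        for i in range(index, last + 1):
            if i not in self.cache:
                self.cache[i] = self.generate(self.prompt_for(i))
                generated.append(i)
        return generated
```

In practice the generation call would run in a background worker rather than inline, and the sync layer would be what reports the reader's position. Writing the cached images into the EPUB is a separate step not shown here.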

The open-source reading infrastructure that already exists handles the sync layer. Everything else is a pipeline problem.

I’m building this as open source. There are more books in the world than illustrators, and there always will be. Most books will never get a visual edition. Not every story fits neatly into what a publisher thinks will sell. But the text is there. The audio is there. The model can read.

That gap doesn’t have to be permanent.

More soon.