I added a CopilotKit AI agent to Freebo last week. The pitch was simple: operators should be able to talk to their system. Type “how much revenue did we make today?” and get a real answer, not a dashboard.

I made a test booking. Five hundred dollars. Then I asked the agent what we’d made.

It said fifty thousand.

The bug is exactly what it sounds like. Freebo stores all money in cents, as integers. It’s a hard rule written into the CLAUDE.md at the root of the project:

> Always in cents (integer). 8500 = $85.00.
>
> Never use floating point for money.

So a $500 booking is stored as 50000. The database is correct. The accounting layer is correct. Every calculation that touches money uses centsToDollars() or dollarsToCents() from a shared money utility, and nothing leaks raw values across the boundary.

Except, as it turns out, one place did.
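The CLAUDE.md rule describes the convention but not the helpers themselves. Here is a minimal sketch of what the two shared helpers might look like. The bodies are assumptions, not Freebo’s actual code: centsToDollars() is assumed to return a plain number, and dollarsToCents() is assumed to round to the nearest cent so that floating-point input like 19.99 still lands on an integer.

```typescript
// Hypothetical sketch of a shared money utility; the real module may differ.

/** Convert an integer amount of cents to dollars (e.g. 8500 -> 85). */
function centsToDollars(cents: number): number {
  // Enforce the storage rule: money at rest is always an integer number of cents.
  if (!Number.isInteger(cents)) {
    throw new TypeError(`Expected integer cents, got ${cents}`);
  }
  return cents / 100;
}

/** Convert a dollar amount to integer cents, rounding to the nearest cent. */
function dollarsToCents(dollars: number): number {
  if (!Number.isFinite(dollars)) {
    throw new TypeError(`Expected a finite dollar amount, got ${dollars}`);
  }
  // Math.round absorbs binary floating-point error: 19.99 * 100 is 1998.9999999999998.
  return Math.round(dollars * 100);
}
```

In this shape, all arithmetic stays in integer cents, and dollars appear only at the edges where someone reads them.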

The AI agent’s read tools (the functions it calls to pull revenue summaries and booking data) were returning raw API payloads directly to the model. Whatever shape the server handed back, that’s what the agent received. No normalization. No conversion. A field called revenue held 50000, and the agent, being a language model, looked at that number and reported fifty thousand dollars.

It wasn’t wrong, exactly. It just had no way to know the convention.

Here’s the part worth thinking about. The system prompt told the agent:

> API stores CENTS. You and the operator speak DOLLARS.
>
> Tools convert at the boundary.

The problem is that the last line was aspirational. The tools didn’t actually convert at the boundary. The instruction described an architecture that hadn’t been built yet.

Language models are surprisingly good at following instructions. They’re also good at filling gaps with their best guess. When a tool returns revenue: 50000 and the system prompt says “you speak dollars,” the model doesn’t notice the inconsistency. It sees a big number, treats it as dollars, and reports accordingly. The model was doing exactly what it was told. The contract just didn’t exist where it needed to.

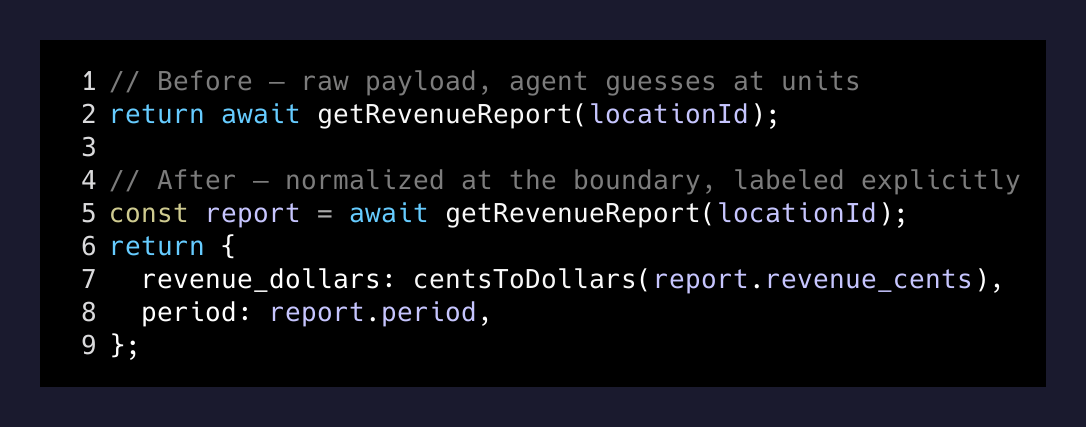

The fix is mechanical but the principle matters.

Every read tool that returns money now calls centsToDollars() before handing the value to the agent:

```typescript
// Before: raw payload, the agent guesses at units
return await getRevenueReport(locationId);

// After: normalized at the boundary, labeled explicitly
// (centsToDollars() comes from the shared money utility)
const report = await getRevenueReport(locationId);
return {
  revenue_dollars: centsToDollars(report.revenue_cents),
  period: report.period,
};
```

And the field names carry the unit: not revenue, but revenue_dollars. If a field ends in _cents, divide by 100. If it ends in _dollars, use it as-is. If it’s unlabeled, the system prompt now tells the agent to ask rather than guess.

Small change. The agent hasn’t hallucinated a number since.
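Written per tool, that fix has to be repeated in every read tool that touches money. A more general alternative is a single pass that every tool result goes through before it reaches the model. The sketch below rests on one assumption: the server labels every amount with a _cents suffix. It rewrites each such field into a _dollars field and recurses into nested objects and arrays. The names normalizeForAgent and Json are hypothetical, and the inline division stands in for centsToDollars() so the example runs on its own.

```typescript
// Hypothetical sketch: rewrite every *_cents field into a *_dollars field
// before a tool result is handed to the agent.
type Json = null | boolean | number | string | Json[] | { [key: string]: Json };

const CENTS_SUFFIX = "_cents";

function normalizeForAgent(value: Json): Json {
  if (Array.isArray(value)) {
    // Normalize each element of a list (e.g. a list of bookings).
    return value.map(normalizeForAgent);
  }
  if (value !== null && typeof value === "object") {
    const out: { [key: string]: Json } = {};
    for (const [key, field] of Object.entries(value)) {
      if (key.endsWith(CENTS_SUFFIX) && typeof field === "number") {
        // revenue_cents: 50000 becomes revenue_dollars: 500
        const base = key.slice(0, -CENTS_SUFFIX.length);
        out[`${base}_dollars`] = field / 100;
      } else {
        out[key] = normalizeForAgent(field);
      }
    }
    return out;
  }
  // Strings, numbers, booleans and null pass through unchanged.
  return value;
}
```

Fields the server leaves unlabeled, such as a bare revenue, pass through untouched. This approach only helps if the API names its amounts consistently.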

The bigger lesson is about contracts between your code and the model.

When you write a function for another developer, there’s an implicit social contract: if you name a field revenue_cents, the other developer will notice the suffix. They’ll look up the type. They’ll find the utility function. They’ll use it correctly because they know what they’re doing.

AI agents don’t have that context. They read what they’re given and reason from there. If you hand an agent an unlabeled number, it will make a confident guess. It won’t stop to ask what unit you’re using. It will tell your operator their $500 tour generated fifty thousand dollars in revenue, and it will sound very sure about it.

The system prompt is not a substitute for correct data. Telling the model to “speak dollars” works only if the tools actually deliver dollars. When the instruction and the data disagree, the data wins, but not in the way you’d expect. The model doesn’t flag the discrepancy. It resolves it silently, and usually gets it wrong.

Every boundary between your code and a language model is an API. It deserves the same rigor you’d give any API. Explicit field names. Normalized units. Stable shapes. The model isn’t a developer who can read your code and infer the intent. It reads what you give it. Give it something unambiguous.
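Rules like “explicit field names, normalized units” can also be checked mechanically, the same way you’d test any other API. The sketch below is a hypothetical runtime guard that walks a tool result and reports two kinds of problems: fields that are still in cents, and known money fields that carry no unit suffix at all. The names findUnitProblems and assertAgentSafe are illustrative. The set of bare money field names is an assumption you would maintain yourself.

```typescript
// Hypothetical runtime guard for tool outputs. It reports:
//   1. any key ending in "_cents" (cents must be converted before the model sees them)
//   2. any numeric field whose bare name is listed in `moneyKeys` (e.g. "revenue"),
//      because an unlabeled amount forces the model to guess the unit.
function findUnitProblems(
  payload: unknown,
  moneyKeys: ReadonlySet<string>,
  path = "$",
): string[] {
  const problems: string[] = [];
  if (Array.isArray(payload)) {
    payload.forEach((item, i) => {
      problems.push(...findUnitProblems(item, moneyKeys, `${path}[${i}]`));
    });
  } else if (payload !== null && typeof payload === "object") {
    for (const [key, value] of Object.entries(payload)) {
      const where = `${path}.${key}`;
      if (key.endsWith("_cents")) {
        problems.push(`${where}: cents must be converted before reaching the model`);
      } else if (typeof value === "number" && moneyKeys.has(key)) {
        problems.push(`${where}: money field has no unit suffix`);
      }
      // Recurse so nested objects and lists are checked too.
      problems.push(...findUnitProblems(value, moneyKeys, where));
    }
  }
  return problems;
}

// Throws if a tool result would hand the model an ambiguous or unconverted amount.
function assertAgentSafe(payload: unknown, moneyKeys: ReadonlySet<string>): void {
  const problems = findUnitProblems(payload, moneyKeys);
  if (problems.length > 0) {
    throw new Error(`Tool output failed unit check:\n${problems.join("\n")}`);
  }
}
```

Running a check like this in tests, or just before a tool returns, makes a broken boundary fail loudly instead of surfacing as a confident wrong answer.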

The fifty-thousand-dollar booking taught me to treat AI tool outputs like public interfaces. Nothing leaves a read tool without going through the normalization layer first. The model should never have to guess what unit your numbers are in.

If it has to guess, it will get it wrong at the worst possible time.