I audited another telehealth site this week. Same story. Zero JSON-LD anywhere on the page. No “medically reviewed by” bylines on any of the condition guides. The About page claimed “100+ providers” and named exactly zero of them. The blog had 43 posts and no author attributions. The llms.txt returned a 404. Robots.txt was a permissive wildcard with no per-bot rules.

This is not a one-off. It is the default state of telehealth on the web.

Mental health is about as YMYL (“Your Money or Your Life”) as a category gets. Google’s quality rater guidelines weight E-E-A-T (Experience, Expertise, Authoritativeness, Trust) harder there than almost anywhere else. And the sites Google is supposed to be punishing are still ranking, because most of their competitors are just as broken. The ones that fix this first will take the AI Overviews citations when the rebalance happens.

Here is the audit I run, in the order I run it, with what I expect to find.

The 30-minute pass

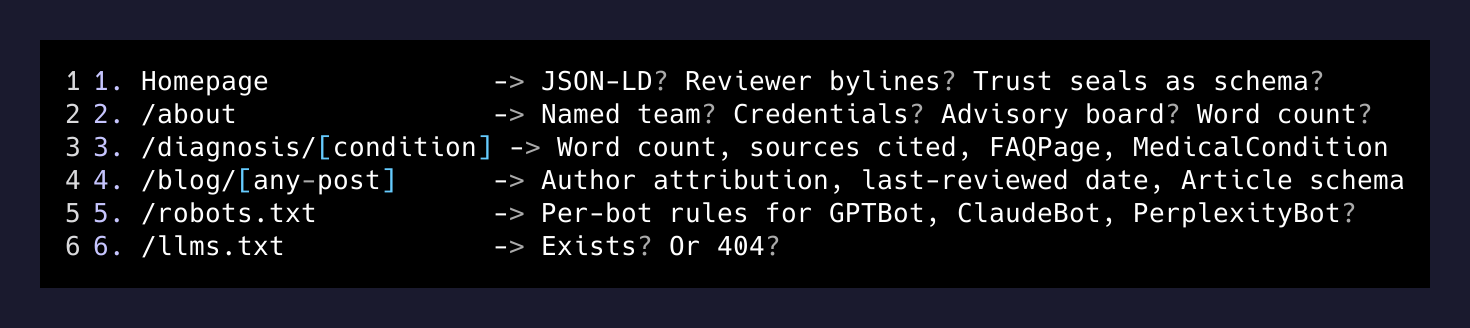

I open six tabs and grade them on a fixed rubric.

1. Homepage -> JSON-LD? Reviewer bylines? Trust seals as schema?

2. /about -> Named team? Credentials? Advisory board? Word count?

3. /diagnosis/[condition] -> Word count, sources cited, FAQPage, MedicalCondition

4. /blog/[any-post] -> Author attribution, last-reviewed date, Article schema

5. /robots.txt -> Per-bot rules for GPTBot, ClaudeBot, PerplexityBot?

6. /llms.txt -> Exists? Or 404?

That is it. Six URLs, thirty minutes, a clear picture of how the site will hold up against Cerebral, Hims, Talkspace, and the big informational sites like ADDitude in 2026 search.
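The JSON-LD questions in that rubric are easy to script. Here is a minimal sketch, assuming you already have the page HTML as a string (fetching is left out on purpose). The names `JsonLdCollector`, `json_ld_types`, `missing_types`, and the `EXPECTED_TYPES` set are my own illustration, not part of any standard tool, and the expected set simply mirrors the schema types this post keeps coming back to.

```python
import json
from html.parser import HTMLParser

# Assumption: the schema.org types this audit looks for on a telehealth site.
EXPECTED_TYPES = {
    "MedicalOrganization", "Physician", "MedicalCondition",
    "MedicalWebPage", "FAQPage",
}


class JsonLdCollector(HTMLParser):
    """Collects the raw text of every <script type="application/ld+json"> block."""

    def __init__(self):
        super().__init__()
        self._in_json_ld = False
        self.blocks = []

    def handle_starttag(self, tag, attrs):
        script_type = (dict(attrs).get("type") or "").lower()
        if tag == "script" and script_type == "application/ld+json":
            self._in_json_ld = True
            self.blocks.append("")

    def handle_data(self, data):
        if self._in_json_ld:
            self.blocks[-1] += data

    def handle_endtag(self, tag):
        if tag == "script":
            self._in_json_ld = False


def json_ld_types(html):
    """Return the set of @type values declared anywhere in the page's JSON-LD."""
    collector = JsonLdCollector()
    collector.feed(html)
    found = set()
    for raw in collector.blocks:
        try:
            data = json.loads(raw)
        except json.JSONDecodeError:
            continue  # malformed JSON-LD is treated the same as missing
        stack = [data]
        while stack:  # walk nested objects and arrays (e.g. @graph)
            node = stack.pop()
            if isinstance(node, dict):
                declared = node.get("@type")
                if isinstance(declared, str):
                    found.add(declared)
                elif isinstance(declared, list):
                    found.update(t for t in declared if isinstance(t, str))
                stack.extend(node.values())
            elif isinstance(node, list):
                stack.extend(node)
    return found


def missing_types(html, expected=EXPECTED_TYPES):
    """Expected schema types that the page does not declare."""
    return set(expected) - json_ld_types(html)
```

On the sites described here, `json_ld_types` would come back empty and `missing_types` would return the whole expected set.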

The four gaps that show up every time

No JSON-LD. Not on the homepage, not on the About page, not on any condition page. No MedicalOrganization. No Physician. No MedicalCondition. No MedicalWebPage. No FAQPage. No Review or AggregateRating even when the brand has hundreds of real reviews on Trustpilot. This is the single largest available lift on most of these sites and the one nobody is shipping.
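For a sense of how small this lift is, here is a rough sketch of generating a MedicalOrganization block with named providers and an optional AggregateRating. The function names and the input shape (`providers` as a list of dicts with `name` and `url`) are assumptions for illustration. I am also assuming `member` as the property that links providers to the organization. Check the property choices against schema.org and Google's structured data guidelines before shipping, and only emit rating numbers that come from real, verifiable reviews.

```python
import json


def medical_organization_json_ld(name, url, providers, rating_value=None, review_count=None):
    """Build a schema.org MedicalOrganization object as a plain dict.

    providers: list of {"name": ..., "url": ...} dicts (hypothetical input shape).
    """
    data = {
        "@context": "https://schema.org",
        "@type": "MedicalOrganization",
        "name": name,
        "url": url,
        "member": [
            {"@type": "Physician", "name": p["name"], "url": p["url"]}
            for p in providers
        ],
    }
    # Only attach a rating when both numbers are actually supplied.
    if rating_value is not None and review_count:
        data["aggregateRating"] = {
            "@type": "AggregateRating",
            "ratingValue": rating_value,
            "reviewCount": review_count,
        }
    return data


def as_script_tag(data):
    """Serialize a JSON-LD dict into a script tag ready for the page <head>."""
    # Escape "</" so a stray "</script>" inside a string cannot close the tag early.
    payload = json.dumps(data, indent=2).replace("</", "<\\/")
    return f'<script type="application/ld+json">\n{payload}\n</script>'
```

Keeping the builder separate from the serializer means the same dict can feed a template, a CMS field, or a test.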

No medical reviewer bylines. Pages making clinical claims about depression, ADHD, anxiety, insomnia, postpartum, all anonymous. Google’s quality rater guidelines for health content effectively require a named, credentialed reviewer per page. The fix is a one-line block: “Medically reviewed by [Name, Credentials] on [Date].” The CMS work to support it is half a day. The trust signal it generates is enormous.
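The byline system is mostly two outputs from the same CMS fields: the visible line and the matching structured data. Below is a minimal sketch, assuming the CMS stores a reviewer name, a credentials string, and a review date per page. The function names are hypothetical. `reviewedBy` and `lastReviewed` are schema.org WebPage properties, which MedicalWebPage inherits, and `honorificSuffix` is a Person property; treat the exact mapping as my assumption rather than a required format.

```python
from datetime import date
from html import escape


def reviewer_byline(name, credentials, reviewed_on):
    """Visible byline in the 'Medically reviewed by [Name, Credentials] on [Date].' format."""
    when = f"{reviewed_on:%B} {reviewed_on.day}, {reviewed_on.year}"
    return f"Medically reviewed by {escape(name)}, {escape(credentials)} on {when}."


def reviewed_page_json_ld(page_url, page_name, reviewer_name, credentials, reviewed_on):
    """MedicalWebPage JSON-LD carrying the same reviewer and date as the byline."""
    return {
        "@context": "https://schema.org",
        "@type": "MedicalWebPage",
        "url": page_url,
        "name": page_name,
        "lastReviewed": reviewed_on.isoformat(),  # ISO 8601 date, e.g. 2026-01-15
        "reviewedBy": {
            "@type": "Person",
            "name": reviewer_name,
            "honorificSuffix": credentials,
        },
    }
```

Driving both from one record keeps the human-readable byline and the schema from drifting apart when a page is re-reviewed.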

No source citations. Condition pages assert clinical claims with zero links to NIMH, NIH, CDC, AAP, APA, or peer-reviewed studies. This is free. It is also the cheapest way to prime an LLM to pull a quote from your page when somebody asks ChatGPT about ADHD treatment options. AI engines surface citation-rich pages because their own outputs need citations.
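Checking a condition page for citations is also scriptable. This is a sketch only: the domain list is my assumption about which hosts map to the organizations named above (`psychiatry.org` stands in for APA, which is ambiguous), and it does not try to detect peer-reviewed journal links. `LinkCollector` and `authoritative_citations` are illustrative names.

```python
from html.parser import HTMLParser
from urllib.parse import urlparse

# Assumption: hosts treated as authoritative for this check. Adjust per brand.
AUTHORITATIVE_DOMAINS = ("nih.gov", "cdc.gov", "aap.org", "psychiatry.org")


class LinkCollector(HTMLParser):
    """Collects the href of every <a> tag on the page."""

    def __init__(self):
        super().__init__()
        self.hrefs = []

    def handle_starttag(self, tag, attrs):
        if tag == "a":
            href = dict(attrs).get("href")
            if href:
                self.hrefs.append(href)


def authoritative_citations(html, domains=AUTHORITATIVE_DOMAINS):
    """Return the sorted, de-duplicated outbound links that point at an authoritative host."""
    collector = LinkCollector()
    collector.feed(html)
    cited = set()
    for href in collector.hrefs:
        host = (urlparse(href).hostname or "").lower()  # relative links have no host
        # Match the domain itself or any subdomain (nimh.nih.gov counts as nih.gov).
        if any(host == d or host.endswith("." + d) for d in domains):
            cited.add(href)
    return sorted(cited)
```

A page passes the Week 3 bar in the fix list when `len(authoritative_citations(html)) >= 3`.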

No team page worth the name. The “About” hub on most of these sites is around 200 words of marketing copy and a stock photo. The brand claims a roster of clinicians. None of them are named on the brand’s own About page. Often you can find provider names in a homepage testimonial block, or scattered on individual booking pages, but there is no canonical team grid. This is the credibility leak that competitors close in a week and turn into a moat.

The fix list, ordered by ROI

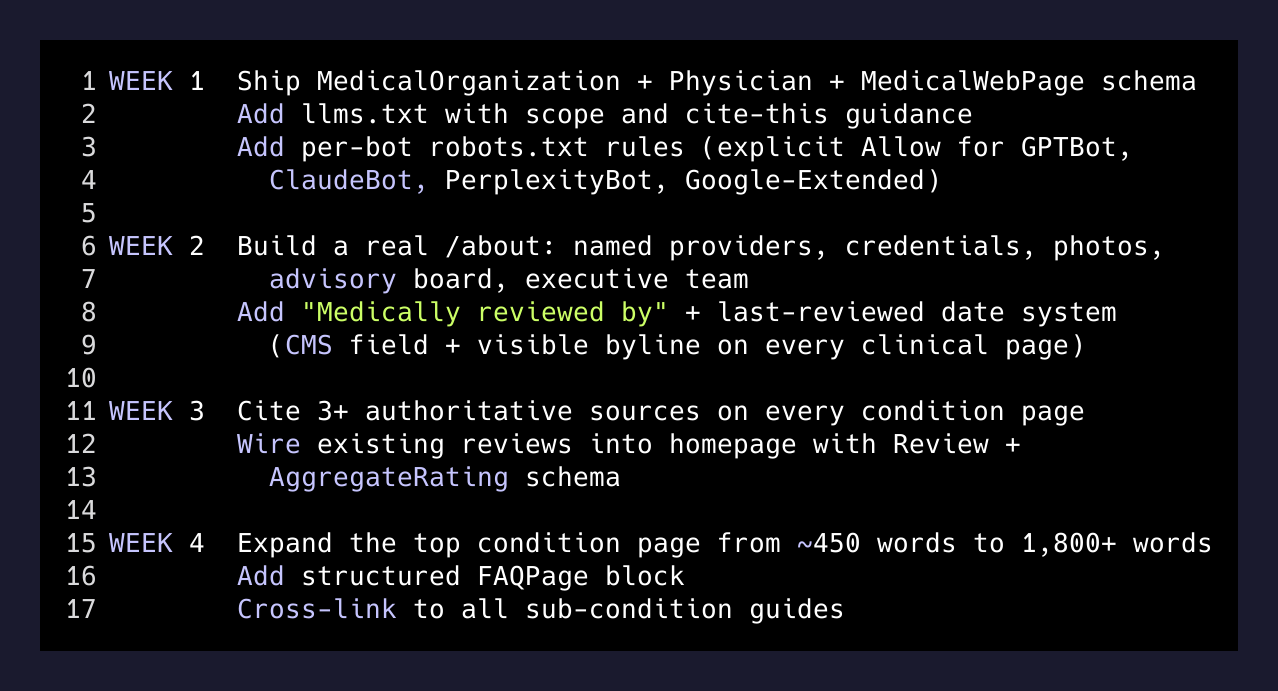

This is the thirty-day deck I write after every audit. Same shape, different brand.

WEEK 1

1. Ship MedicalOrganization + Physician + MedicalWebPage schema
2. Add llms.txt with scope and cite-this guidance
3. Add per-bot robots.txt rules (explicit Allow for GPTBot, ClaudeBot, PerplexityBot, Google-Extended)

WEEK 2

1. Build a real /about: named providers, credentials, photos, advisory board, executive team
2. Add "Medically reviewed by" + last-reviewed date system (CMS field + visible byline on every clinical page)

WEEK 3

1. Cite 3+ authoritative sources on every condition + symptom page
2. Wire existing reviews into homepage with Review + AggregateRating schema (most brands have real review volume that is invisible to crawlers)

WEEK 4

1. Expand the top condition page from ~450 words to 1,800+ words
2. Add structured FAQPage block
3. Cross-link to all sub-condition guides

Every item on that list is executable. Every item moves a measurable signal. Most of the items are a half-day of work. The reason it does not happen is not that it is hard. The reason is that the people running these brands are spending all of their attention on patient acquisition velocity and none on the substrate that makes the AI engines decide they are the real authority.
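The Week 1 robots.txt item is a good example of how small these tasks are. Here is a hedged sketch that writes explicit per-bot Allow groups for the four crawlers named in the list and then checks the result with Python's standard `urllib.robotparser`. The function names and the decision to allow everything under `/` are my assumptions; a real site will usually have paths (booking flows, patient portals) it wants to disallow.

```python
from urllib.robotparser import RobotFileParser

# The crawler tokens named in the Week 1 item.
AI_BOTS = ("GPTBot", "ClaudeBot", "PerplexityBot", "Google-Extended")


def build_robots_txt(bots=AI_BOTS, sitemap_url=None):
    """Emit one explicit Allow group per bot, then the wildcard fallback."""
    lines = []
    for bot in bots:
        lines += [f"User-agent: {bot}", "Allow: /", ""]
    lines += ["User-agent: *", "Allow: /"]
    if sitemap_url:
        lines += ["", f"Sitemap: {sitemap_url}"]
    return "\n".join(lines) + "\n"


def bots_without_explicit_rules(robots_txt, bots=AI_BOTS):
    """Bots that have no User-agent group of their own (the audit finding)."""
    declared = {
        line.split(":", 1)[1].strip().lower()
        for line in (raw.strip() for raw in robots_txt.splitlines())
        if line.lower().startswith("user-agent:")
    }
    return [bot for bot in bots if bot.lower() not in declared]


def bot_can_fetch(robots_txt, bot, url):
    """Ask the standard-library parser whether the bot may fetch the URL."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return parser.can_fetch(bot, url)
```

Run `bots_without_explicit_rules` against a permissive wildcard file and all four bots come back as missing, which is exactly what the audit flags. The crawl behavior is the same either way; the explicit groups state the policy on purpose rather than by default.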

Why this is going to matter more, not less

Google’s December 2025 update extended E-E-A-T to all competitive queries, not just YMYL. AI Overviews are now stable across health queries and they pull from sites that look authoritative to the citation engine. ChatGPT’s web mode and Perplexity have been live long enough that brand search is no longer “did they rank in the ten blue links” but “did the model cite them when somebody asked it for a recommendation.”

The brands that figure out the schema layer in 2026 are going to look, to a citation engine, like the only legitimate options in their category. Their less-rigorous competitors will still exist, will still run ads, will still buy clicks. They just will not be the ones quoted when somebody asks an LLM “where should I get telehealth treatment for ADHD.”

This is the bet I am making with the next round of clients I am taking. Schema, bylines, and named clinicians are not nice-to-have polish. They are the spec for whether you exist in the next layer of search.

The audit takes thirty minutes. The thirty-day fix list is a calendar’s worth of execution. The brands that ship it first do not lose this market. The brands that keep treating their About page as decoration do.

If your team is sitting on a telehealth, mental-health, or YMYL-adjacent site and you have not run this pass on yourself recently, run it. The gaps will be there. The fix list will be the same. The clock on getting it shipped before your competitors do is the only thing that varies.